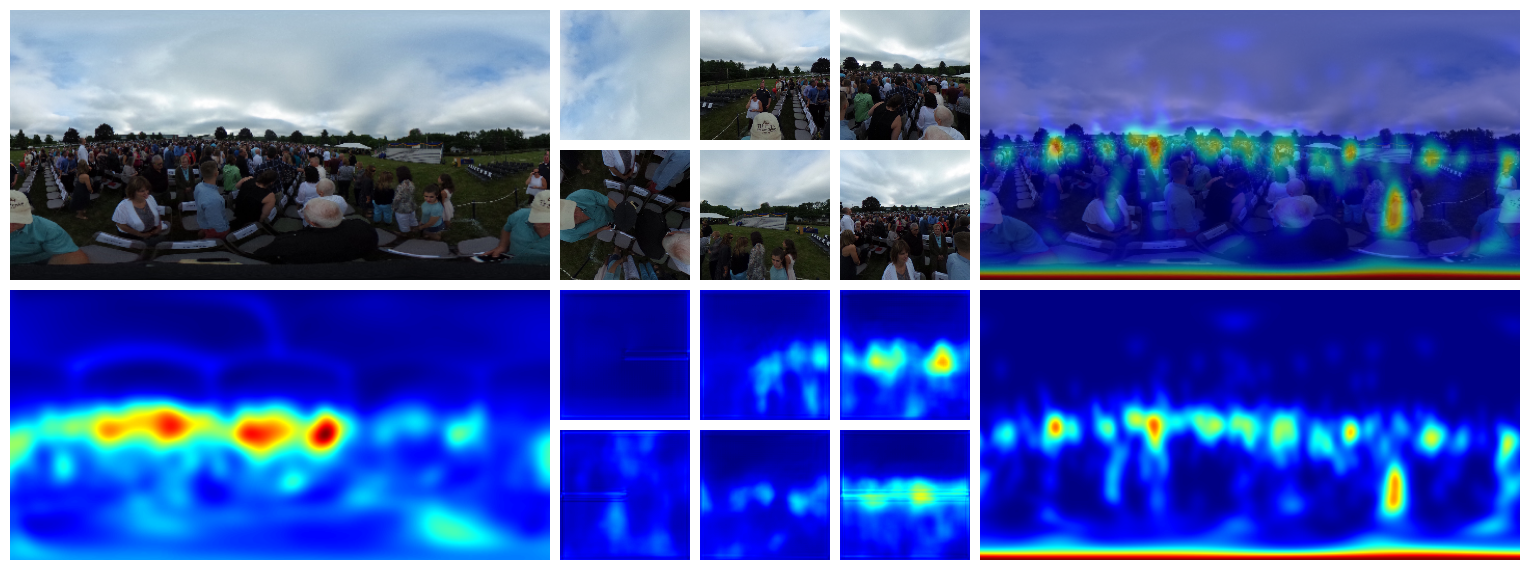

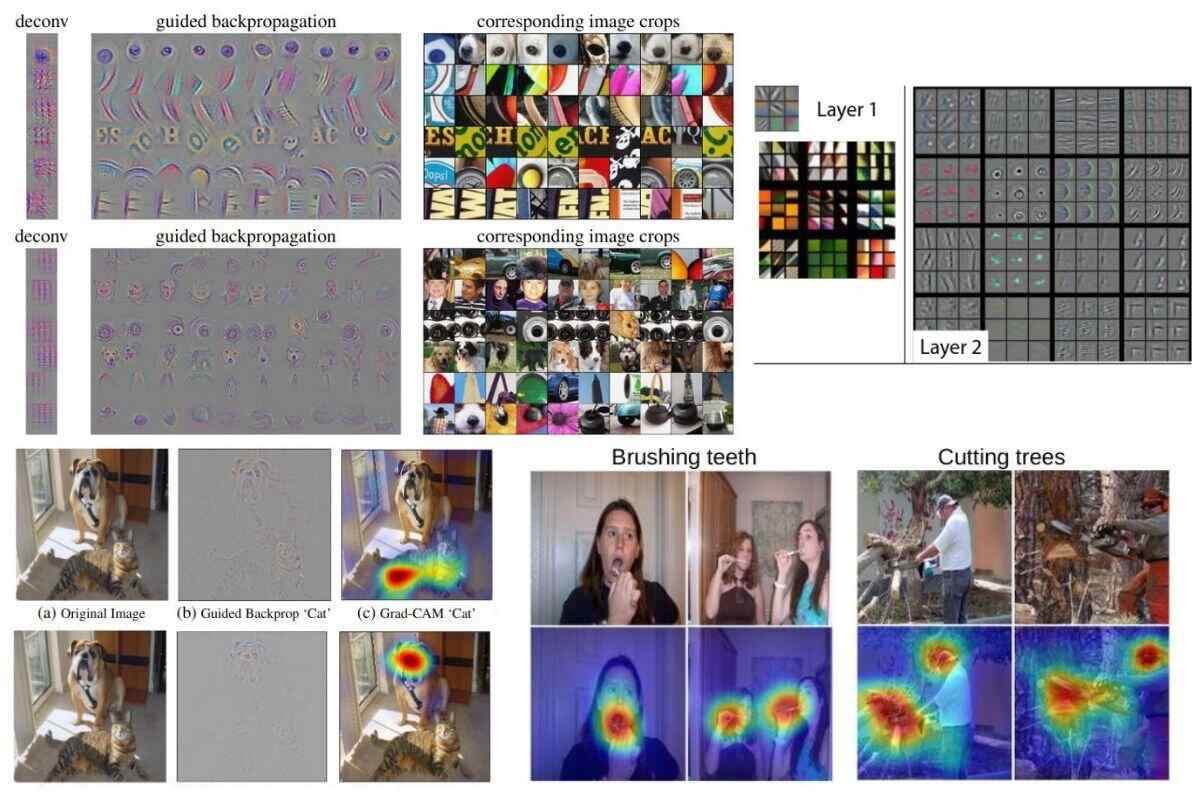

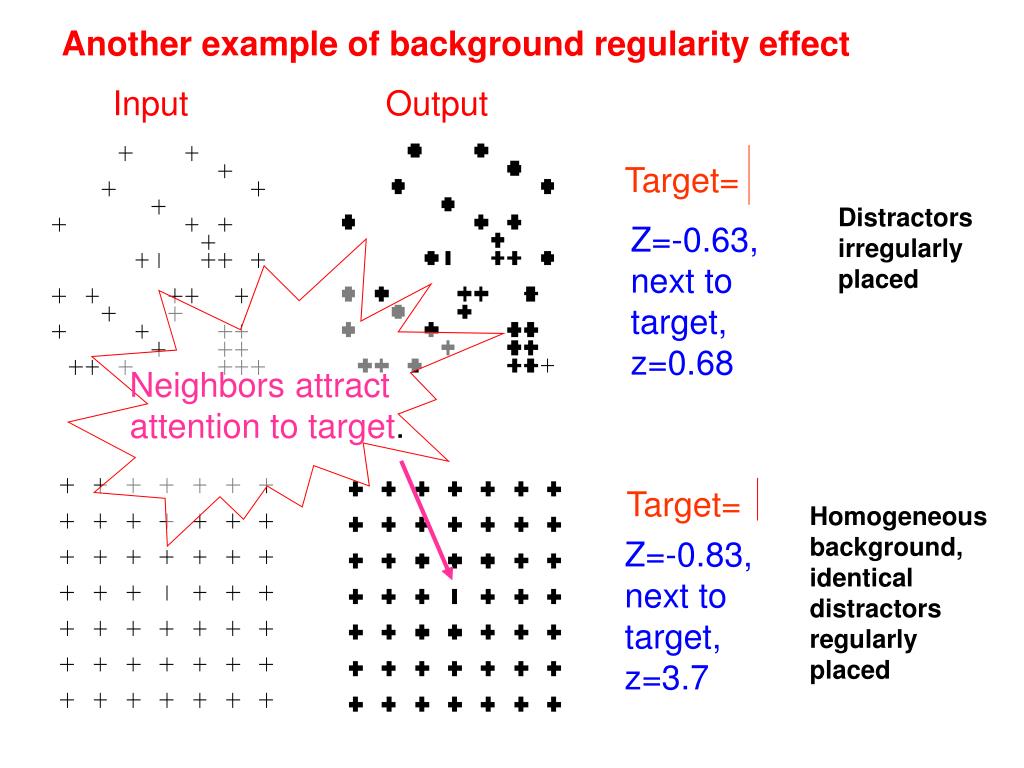

Due to the black-box nature of deep learning models, there has been a recent development of solutions for visual explanations of CNNs. Given the high cost of user studies, metrics are necessary to compare and evaluate these different methods. In this paper, we critically analyze the Deletion Area Under Curve (DAUC) and Insertion Area Under Curve (IAUC) metrics proposed by Petsiuk et al. These metrics were designed to evaluate the faithfulness of saliency maps generated by generic methods such as Grad-CAM or RISE.

First, the actual saliency score values given by the saliency map are ignored, as only the ranking of the scores is taken into account. This shows that these metrics are insufficient by themselves: one can drastically change the visual appearance of a saliency map without modifying the ranking of the scores, and therefore without changing the DAUC and IAUC. Secondly, we argue that during the computation of DAUC and IAUC, the model is presented with images that are out of the training distribution, which might lead to unreliable behavior of the model being explained.

To complement DAUC/IAUC, we propose new metrics that quantify the sparsity and the calibration of explanation methods. Finally, we give general remarks about these metrics.
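To make the ranking argument concrete, here is a minimal sketch of a deletion-style AUC in Python with NumPy. It is not the implementation of Petsiuk et al.: `toy_model`, `deletion_auc`, the 8×8 image, and the step size are hypothetical names and values chosen for illustration, and pixels are "deleted" simply by setting them to zero. Because the saliency map enters the computation only through `argsort`, a monotonic rescaling of the scores (here, raising them to the fourth power) leaves the resulting value unchanged.

```python
import numpy as np


def toy_model(image):
    """Hypothetical stand-in for a classifier; returns a score in [0, 1]."""
    weights = np.linspace(0.0, 1.0, image.size).reshape(image.shape)
    return float((weights * image).sum() / weights.sum())


def deletion_auc(model, image, saliency, step=4):
    """Zero out pixels from most to least salient and integrate the scores.

    Simplified sketch: only the ordering of `saliency` is used, never its
    actual values.
    """
    order = np.argsort(-saliency.ravel(), kind="stable")  # ranking only
    flat = image.astype(float).ravel().copy()
    removed = [0]                                  # pixels deleted so far
    scores = [model(flat.reshape(image.shape))]    # score on the full image
    for start in range(0, order.size, step):
        flat[order[start:start + step]] = 0.0      # "delete" by zeroing
        removed.append(min(start + step, order.size))
        scores.append(model(flat.reshape(image.shape)))
    x = np.asarray(removed) / order.size           # fraction removed, 0..1
    y = np.asarray(scores)
    # Trapezoidal rule, written out to avoid NumPy version differences.
    return float(np.sum((y[1:] + y[:-1]) / 2.0 * np.diff(x)))


rng = np.random.default_rng(0)
image = rng.random((8, 8))
# Distinct saliency values in [0, 1], so the ranking has no ties.
saliency = rng.permutation(64).reshape(8, 8) / 63.0
# Monotonic transform: looks much sparser, but the ranking is identical.
sharpened = saliency ** 4

dauc_original = deletion_auc(toy_model, image, saliency)
dauc_sharpened = deletion_auc(toy_model, image, sharpened)
print(dauc_original, dauc_sharpened)
```

The two printed values are identical even though the sharpened map concentrates almost all of its mass on a few pixels. This is the sense in which the metric ignores the score values.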
